Java is required for both Elasticsearch and Logstash. Install ELK dependenciesĪccess your VPS and run the following commands as a sudo user to install required dependencies: Java

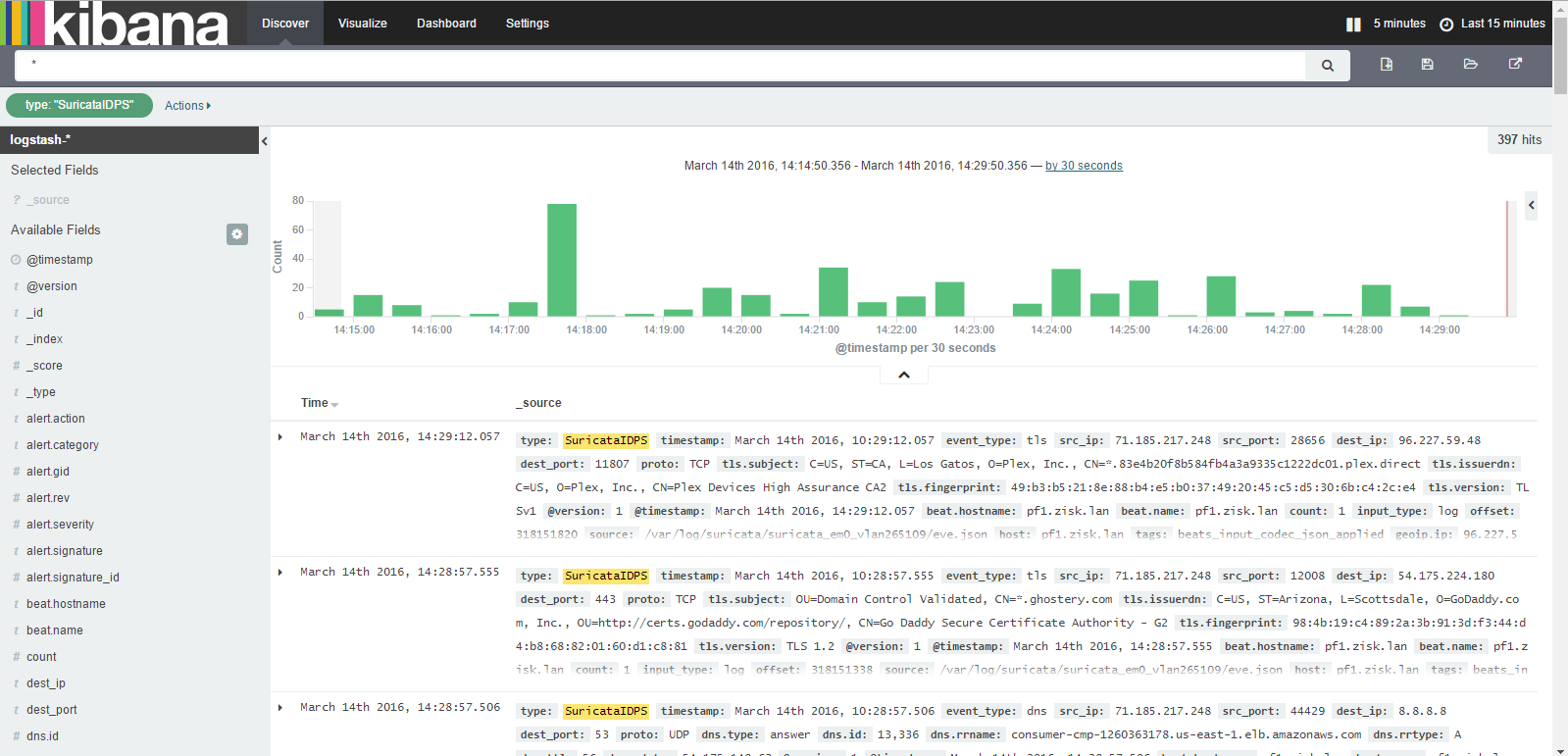

With my current amount of traffic log data 4GB RAM is enough so far. It is running Elasticsearch, Kibana and Logstash processes. If you use Cloudflare for your DNS remember not to use their CDN for this domain because it changes IP domain resolves to and can cause trouble with setup.įor my ELK stack server, I use a 4GB Digital Ocean VPS with Ubuntu 18.04. You will also need a domain or a subdomain you will config with your VPS server IP using an A DNS entry. I don’t elaborate on how to do it in this tutorial. You need to start with purchasing a barebones VPS and adding SSH access to it. This is the eBook that I wish existed when I was first tasked with moving the Heroku database to AWS as a developer with limited dev ops experience. Just to show you a sneak peak of what we will be building:Ĭurrently, I am using Kibana to analyze traffic logs of this blog and Abot for Slack landing page. Check out the release notes for the current ELK version and potential breaking changes. This step by step tutorial covers the newest at the time of writing version 7.7.0 of the ELK stack components on Ubuntu 18.04. We will also setup GeoIP data and Let’s Encrypt certificate for Kibana dashboard access. NGINX logs will be sent to it via an SSL protected connection using Filebeat. No need to be a dev-ops pro to do it yourself.ĮLK stack will reside on a server separate from your application. By following this tutorial you can setup your own log analysis machine for a cost of a simple VPS server. Hosted solutions are a bit pricey with monthly costs starting around $50 for a reasonable features set. I don’t dwell on details but instead focus on things you need to get up and running with ELK-powered log analysis quickly.Ĭomparing to other tools available ELK gives you extreme flexibility in terms of ways to analyze and present your logs data. In this tutorial, I describe how to setup Elasticsearch, Logstash and Kibana on a barebones VPS to analyze NGINX access logs. 
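The whole pipeline exists to turn raw NGINX access-log lines into structured fields that Kibana can filter and chart. In the Elastic stack, that parsing usually happens in Logstash (for example with a grok pattern) or in a Filebeat module. The snippet below is neither of those. It is a minimal Python sketch of the same idea, assuming NGINX’s default `combined` log format. The regex and the `parse_combined` name are illustrative only.

```python
import re
from datetime import datetime
from typing import Optional

# Pattern for NGINX's default "combined" log_format:
# $remote_addr - $remote_user [$time_local] "$request" $status
# $body_bytes_sent "$http_referer" "$http_user_agent"
COMBINED = re.compile(
    r'(?P<remote_addr>\S+) - (?P<remote_user>\S+) '
    r'\[(?P<time_local>[^\]]+)\] '
    r'"(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<body_bytes_sent>\d+|-) '
    r'"(?P<http_referer>[^"]*)" "(?P<http_user_agent>[^"]*)"'
)


def parse_combined(line: str) -> Optional[dict]:
    """Split one access-log line into named fields, or return None if it does not match."""
    m = COMBINED.match(line)
    if m is None:
        return None
    fields = m.groupdict()
    # "GET /path HTTP/1.1" -> method, path, protocol; malformed requests keep only the raw string
    parts = fields["request"].split()
    if len(parts) == 3:
        fields["method"], fields["path"], fields["protocol"] = parts
    fields["status"] = int(fields["status"])
    raw_bytes = fields["body_bytes_sent"]
    fields["body_bytes_sent"] = 0 if raw_bytes == "-" else int(raw_bytes)
    # time_local looks like 10/Jun/2020:13:55:36 +0000
    fields["timestamp"] = datetime.strptime(fields["time_local"], "%d/%b/%Y:%H:%M:%S %z")
    return fields
```

Consider a line such as `203.0.113.7 - - [10/Jun/2020:13:55:36 +0000] "GET /blog/elk HTTP/1.1" 200 5123 "-" "Mozilla/5.0"`. For that input, the function returns a dict with `method` set to `"GET"`, `path` set to `"/blog/elk"` and `status` set to `200`. A custom `log_format` in your NGINX config would need a matching pattern.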
ELK (the Elastic stack) is a popular open-source solution for analyzing weblogs. Let’s look at Logstash, its log-processing component, and at Fluentd from a use-case perspective.

Use Cases

Log Collection

Fluentd: Docker has a built-in logging driver for Fluentd, so no additional agent is required on the container to push logs to Fluentd. Logs are shipped directly from STDOUT to the Fluentd service, and no additional log file or persistent storage is required. For example, add a `logging:` section to the service in the Docker Compose file.

Logstash: The application logs from STDOUT are captured in the Docker logs and written to a file. The logs then have to be read from that file by a shipper such as Filebeat and sent to Logstash.

Log Parsing

Fluentd has standard built-in parsers such as json, regex, csv, syslog, apache and nginx, as well as third-party parsers like grok.

Logstash has more plugins for filtering and parsing, such as aggregate and geoip, in addition to the standard formats.

Metrics

Fluentd doesn’t have an out-of-the-box capability to collect system/container metrics. It can, however, scrape metrics from a Prometheus exporter.

Logstash uses Metricbeat, which can collect system/container metrics out of the box and forward them to Logstash. Logstash can also scrape metrics from a Prometheus exporter.

Scraping

Fluentd has the http_pull plugin, which can pull data from HTTP endpoints such as metrics and health checks.

Logstash has an http plugin (supported by Elastic) which can likewise pull data from HTTP endpoints.

Looking at the above use cases, it should be clear that both Fluentd and Logstash are suitable for certain requirements.

For monolithic applications on traditional VMs, Logstash looks like a clear choice, as it supports multiple agents for collecting logs, metrics, health checks etc.

For microservices hosted on Docker/Kubernetes, Fluentd looks like a great choice, considering its built-in Docker logging driver and seamless integration. It supports all commonly used parsers like json, nginx, grok etc.

The best part is that both can co-exist in the same environment and be used for specific use cases.
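Both tools can scrape a Prometheus exporter. In practice, this means issuing an HTTP GET against the exporter’s metrics endpoint on an interval and parsing the plain-text exposition format it returns. The following is a minimal Python sketch of a single scrape. It is not how Fluentd’s or Logstash’s plugins are implemented. `scrape`, `parse_exposition` and `Sample` are hypothetical names, and the parser is deliberately simplified in three ways:

* It skips `# HELP`/`# TYPE` comments.
* It drops optional timestamps.
* It leaves escaped characters in label values as-is.

```python
import re
import urllib.request
from typing import Dict, List, NamedTuple


class Sample(NamedTuple):
    """One sample line from the Prometheus text exposition format."""
    name: str
    labels: Dict[str, str]
    value: float


# metric_name{label="value",...} value [timestamp]
SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)'
    r'(?:\{(?P<labels>.*)\})?'
    r'\s+(?P<value>\S+)(?:\s+-?\d+)?$'
)
# label="value" pairs; escaped characters (\" \\ \n) are kept as-is, not unescaped
LABEL_RE = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="((?:[^"\\]|\\.)*)"')


def parse_exposition(text: str) -> List[Sample]:
    """Parse exporter output into samples, skipping comments and unrecognized lines."""
    samples: List[Sample] = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):  # blank lines and # HELP / # TYPE comments
            continue
        m = SAMPLE_RE.match(line)
        if m is None:
            continue  # this simplified sketch silently skips lines it cannot parse
        try:
            # float() accepts the special values NaN, +Inf and -Inf used by Prometheus
            value = float(m.group("value"))
        except ValueError:
            continue
        labels = dict(LABEL_RE.findall(m.group("labels") or ""))
        samples.append(Sample(m.group("name"), labels, value))
    return samples


def scrape(url: str, timeout: float = 5.0) -> List[Sample]:
    """Fetch one snapshot from an exporter endpoint and parse it (single pull, no retry)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return parse_exposition(resp.read().decode("utf-8"))
```

Suppose a Prometheus node_exporter is listening on its default port. In that case, `scrape("http://localhost:9100/metrics")` would return one `Sample` per metric line. A real collector would call it on a timer and forward the samples, which is the job the http_pull and http plugins take on.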
